This post reports initial impressions of Apple MacOS Monterey running on Apple Silicon. The OS is solid and well behaved but, as usual, some applications need further refinement. This note summarizes what we have learned so far about the following areas.

- MacOS 12 Monterey itself

- MacOS 12 Monterey support applications

- Apple Applications like Photos and Music

- Apple Focus

I decided to write this post to capture some of my early misadventures with Monterey. Here we talk about some new features and review some old ones. To cut to the chase, Monterey is well sorted and ready for general use.

Revisions

- 2021-11-15 Initial

References

- MacOS Monterey 12.0.1

- Joe Kissell, Take Control of Monterey. alt concepts inc., San Diego, CA, USA, 2021. ISBN 978-1-95-454609-7.

- Glenn Fleishman, Take Control of Your M-Series Mac. San Diego, CA, USA, 2021. ISBN 978-1-95-45602-8.

- https://support.1password.com/getting-started-mac/

Reference [1] is the OS release reviewed. Reference [2] is a good, compact but complete introduction to MacOS Monterey. For experienced folk, you’ll want a copy of Reference [3] available. M-Series recovery procedures have changed significantly relative to the Intel Mac series.

Tools Used

- Discovery.app Version 2.1.0

Monterey on Apple Silicon

Apple’s decision to design its own processors has paid significant performance dividends to the product line. The new M1 processor is comparable in performance to the Intel Core products used in Apple’s legacy product line. The performance improvement comes from several sources, notably

- The ARM instruction set architecture

- The bus fabric that connects the high speed subsystems of the computer. The solid state secondary storage is a bus fabric device rather than a PCI Bus device.

- MacOS 11 and 12 architectural improvements, which arrive as Apple retires legacy software components and debuts new ones tuned to the new graphics and machine learning hardware.

Immediate Impressions

- Monterey is fast on Apple Silicon. Audio and video transcoding is much faster. Photos plugins are much faster. BlackMagic Design DaVinci Resolve is much faster. XLD is much faster. Any application using vector and matrix operations is much faster than on the 2017 Core i7 iMac.

- Monterey is much better at mounting network shares than earlier releases.

- Monterey starts faster and system updates are faster. System initialization code and hardware appear better aligned. M1 iMac connects the storage directly to the processor bus fabric. Secure enclave processing to verify MacOS signature and decrypt the system image and system tasks is much faster.

- Monterey no longer does 32 bits.

- Kernel extensions (KEXTs) are on the way out.

- ZSH is the default shell, as it has been since Catalina. BASH still ships but is no longer the default.

- Monterey has a bit of a reputation for exhausting main memory. Applications fail to launch, making the culprit harder to identify.

- Monterey Time Machine uses SMB (CIFS) shares now. Apple Filing Protocol (AFP) has been retired.

- The startup chime is back. It is on by default but may be turned off. Adjust it in Sound Preferences.

- Monterey is able to run selected iOS applications. This requires the developer to build the application properly to set the supported platforms in the image header. It also requires some care in selecting UI widgets that work with touch screen, mouse, and touch pad. Most publishers are retesting their products with MacOS before marking them for use on MacOS.
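When memory runs short and applications refuse to launch, a quick way to look for the culprit from Terminal is to rank processes by resident memory. The following is a minimal sketch, not a diagnostic tool: it assumes the stock `ps` command and its `rss` and `comm` columns, the function names are my own, and resident size is only a rough proxy for who is hogging memory.

```python
import subprocess


def parse_ps_rss(ps_output):
    """Parse `ps -ax -o rss= -o comm=` output into (rss_kib, command) pairs."""
    rows = []
    for line in ps_output.splitlines():
        parts = line.split(None, 1)
        if len(parts) == 2 and parts[0].isdigit():
            rows.append((int(parts[0]), parts[1].strip()))
    return rows


def top_memory(rows, count=5):
    """Return the `count` largest entries, biggest resident set first."""
    return sorted(rows, key=lambda row: row[0], reverse=True)[:count]


if __name__ == "__main__":
    # Empty column headers (rss= and comm=) suppress the title row.
    # ps reports rss in units of 1024 bytes.
    out = subprocess.run(
        ["ps", "-ax", "-o", "rss=", "-o", "comm="],
        capture_output=True, text=True, check=True,
    ).stdout
    for rss_kib, command in top_memory(parse_ps_rss(out)):
        print(f"{rss_kib / 1024:8.1f} MiB  {command}")
```

Activity Monitor shows the same information with more context; the script is handy when the GUI itself will not open.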

Hey, Apple, Time for ECC memory!

If I let my Mac sleep overnight (see Sleep below) I occasionally return to find a kernel panic (rare) or an application hang (often). Today it was Firefox that had been awake overnight until it became unresponsive (a zombie in UNIX vernacular). Apple, you really need to start using ECC memory in all your machines that run on shore power. It doesn’t cost that much more and the machines are so much more reliable.

In recent weeks, several of these died-in-my-sleep events have happened to this Mac. It has half the memory of the Xeon E3 machine that runs TrueNAS Core. Both operating systems are built on a FreeBSD foundation but my TrueNAS Core machine has ECC memory. It just cruises. It will tell me when it has recovered a memory error and won’t panic until one can’t be recovered in kernel code or kernel storage. This beast just keeps on rolling.

Given that MacOS now does significant user housekeeping overnight (mail, photos, Time Machine), it is convenient to leave the machine to nod off to sleep rather than logging out or shutting down.

Even when logged out, the machine can be busy running a build in the background, taking part in Git transactions, etc., that run as batch jobs or system daemons (UNIX term of art for service processes) that use the network. Apple wisely includes ECC in the Mac Pro. I’d really like it in my iMac. I don’t need the big processor pool or GPU pool. The little 24 inch M1 iMac is pretty much just right other than not having ECC.

MacOS Rosetta

Rosetta is Apple’s x86-64 (Intel 64-bit) emulation architecture. Rosetta makes it possible to run older MacOS x86-64 binaries on Apple Silicon. The Rosetta system uses just in time translation of the x86-64 binary to Apple Silicon instructions. Apple Silicon is so fast that Rosetta translation is not really noticed. Apparently, Apple caches the translated image so that the translation overhead is a one time thing. The translated image is usually faster than the original x86-64 image. This is not surprising as complex x86-64 instructions can be treated as macros and expanded into efficient ARMv8 code. The Apple Silicon M1 is wicked fast and well suited to this sort of emulation.
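Because translation is so transparent, it can be hard to tell whether a given process is running natively or under Rosetta. Apple documents a `sysctl.proc_translated` key for this. Here is a small sketch that reads it through the `sysctl` command line tool; the function names are mine, and it assumes that a child `sysctl` launched from a translated parent is itself translated, so treat the answer as a hint rather than gospel.

```python
import platform
import subprocess


def describe_translation(sysctl_value):
    """Interpret the text printed by `sysctl -n sysctl.proc_translated`."""
    value = (sysctl_value or "").strip()
    if value == "1":
        return "translated by Rosetta"
    if value == "0":
        return "native"
    # Key missing or unreadable: e.g. an older MacOS release or a non-Mac host.
    return "unknown"


def current_process_translation():
    """Ask sysctl how processes launched from this one are being run."""
    if platform.system() != "Darwin":
        return "unknown"
    result = subprocess.run(
        ["sysctl", "-n", "sysctl.proc_translated"],
        capture_output=True, text=True,
    )
    return describe_translation(result.stdout if result.returncode == 0 else None)


if __name__ == "__main__":
    print(f"{platform.machine()}: {current_process_translation()}")
```

Running it once from a native Terminal and once from a shell started with `arch -x86_64` should show the difference on an M1 Mac.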

MacOS Sleep

When a user has been inactive for a period (settable) or the user tells MacOS to sleep, it goes to sleep. Sleep is an active state. MacOS is still receiving mail, running Time Machine, and doing other housekeeping. Apple recommends that you let your Mac have an occasional nap so it can keep up with these tasks that have user context.

When you log out, MacOS runs down and stops all of the active processes owned by that user. Logging out will not allow Time Machine to finish its work. So allowing some sleep is good. I imagine iCloud exchanges also happen, particularly synchronization of the local master photo library with the iCloud photo library.

Application Comments

Apple has made improvements throughout the included application set. Here, I’ll mention some that I find noteworthy and some that I find need further refinement.

About This Mac -> System Report

System report uses a tree view to show the hardware and software configuration of the MacOS host at the time a side-bar topic is opened. Once the information is gathered, the item is static so you can read it. This choice prevents thrashing but requires closing and reopening a topic to discover the effect of a change.

I recommend spending some time poking around in the System Report. It shows lots of good information in addition to bus topology and connected devices. For example, you can discover the camera raw parsers provided in Photos or all of the available fonts on the system, how they are encoded, who provided them, and who holds the copyrights and trademarks. If you need to know something about your Mac that is somewhat obscure, this is the place to look.

System Preferences -> Sound

Sound System Preferences is dynamic. Its Output display is organized into two sections, local bus sound resources and network resources. These sections are dynamic. The local section is the place to look for USB sound plug and play changes.

Sound System Preferences network section is an abbreviated list of the AirPlay devices that are detected. This list is usually not complete. For example, mine is missing 3 of the 4 RoPieee Shairport devices on the network.

AirPlay Control

AirPlay controls appear in the system summary menu bar item and in various applications. As with the System Preferences Sound AirPlay section, the list of remote devices is inconsistent from application to application.

Is something going on with Bonjour item time to live? All of the devices should be advertising themselves in Bonjour. Looking at the Bonjour service publications using Discovery.app, the AirPlay chooser rendering is consistent with the AirPlay advertisers shown by Discovery.

Later, I discovered that one of my Raspberry Cobbler AirPlay receivers had stalled. This was traced to an unseated Micro SD card.
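The comparison I did by eye, Bonjour advertisers versus what an AirPlay chooser shows, can also be scripted. This sketch parses text saved from `dns-sd -B _raop._tcp` run in Terminal and reports advertised receivers that a chooser did not list. The column layout it expects (timestamp, Add/Rmv, flags, interface, domain, service type, instance name) is my assumption about the tool's output, and the device names in the usage note are made up.

```python
def parse_browse_output(text):
    """Collect instance names still advertised at the end of a browse log."""
    names = set()
    for line in text.splitlines():
        # Assumed columns: timestamp, Add/Rmv, flags, interface,
        # domain, service type, instance name (may contain spaces).
        fields = line.split(None, 6)
        if len(fields) != 7:
            continue
        event, name = fields[1], fields[6].strip()
        if event == "Add":
            names.add(name)
        elif event == "Rmv":
            names.discard(name)
    return names


def missing_from_chooser(advertised, chooser):
    """Names Bonjour advertises that the AirPlay chooser did not show."""
    return sorted(set(advertised) - set(chooser))
```

For example, `missing_from_chooser(parse_browse_output(saved_text), ["B827EB000002@Living Room"])` would list every advertised receiver other than the hypothetical Living Room one. If a receiver is missing from the browse log itself, as my stalled one was, the problem is on the device and not in MacOS.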

Protect Mail Activity

Over the last several years, I’ve toyed with the AirMail and Spark mail clients and I’m in the process of de-Googling my email. I like the pretty faces of the third party apps but find the enhanced user experiences a bit annoying, so with Monterey I’ve resumed using Apple’s Mail.app.

With Monterey, Apple changed the behavior of Mail to reduce the number of loopholes by which you can be tracked. This includes new switchology in several places including Mail preferences, iCloud settings, and iOS settings. Basically, Mail now loads remote content using an onion network of proxies. This works transparently some of the time and not at all at other times. Apple gives you a button to directly pull missing items. Said button is hidden if all missing material could be fetched via the onion proxies.

Apple does a commendable job describing the intent of Protect Mail Activity and the switchology for enabling it. Apple’s description implies that mail imagery will be downloaded in an anonymous manner to prevent tracking. Most mail containing images loads like this.

This example is an Apple Store product shipping message. I was expecting messages from well known and behaved sources such as Apple to download without user action.

One good side effect of this is that it is quick to sort out useful messages from adverts. Most adverts, even those from the symphony and the arts festival, have no textual content. Everything is in the posters chucked into the message.

Notifications System

Apple has made the notification system more considerate of the user’s likely activity at notification arrival. Apple calls the feature Focus. How Bay Area can you get?

Focus lets the user create named user states like Personal, Do Not Disturb, Work, Sleep, and Driving. Those just mentioned come pre-configured and may be renamed. You can add and remove additional states as needed. Your Macs and iThings share a common set of Focus states and permissions. It is necessary to configure Focus only once and all devices logged into your iCloud account will reflect that configuration.

For each named state, Apple lets you specify the applications whose notifications will be delivered and from whom calls and messages should be forwarded. A contact group may be picked for this filter. You can also choose everyone or no one. iPhone Focus will add frequent callers to the allowed contacts set. You can configure automatic activation of the Focus mode by time, location, or application launched.

There’s no notion of a state machine and state transitions. Rather state entry is manual, conditional on time of day interval, conditional on location, or conditional on application activation. Care is needed to keep the state entry conditions orthogonal to prevent thrashing between two states.
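To make the orthogonality point concrete, here is a toy check for time-of-day conditions that overlap between states. Everything in it is hypothetical: Apple exposes no such API, the state names and windows are invented, and the sketch only models the time-interval condition, not location or application triggers.

```python
from itertools import combinations

MINUTES_PER_DAY = 24 * 60


def to_minutes(hhmm):
    """Convert 'HH:MM' to minutes after midnight."""
    hours, minutes = hhmm.split(":")
    return int(hours) * 60 + int(minutes)


def covered_minutes(start, end):
    """Minutes of the day in [start, end); the window may wrap past midnight.

    A window whose start equals its end is treated as the whole day.
    """
    s, e = to_minutes(start), to_minutes(end)
    if s < e:
        return set(range(s, e))
    return set(range(s, MINUTES_PER_DAY)) | set(range(0, e))


def overlapping_states(schedules):
    """Return pairs of state names whose windows share at least one minute."""
    clashes = []
    for (name_a, win_a), (name_b, win_b) in combinations(sorted(schedules.items()), 2):
        if covered_minutes(*win_a) & covered_minutes(*win_b):
            clashes.append((name_a, name_b))
    return clashes
```

With a Sleep window of 22:00 to 06:30 and a Meditate window of 06:00 to 06:45, the check flags the pair, which is exactly the half hour where the two states would fight over who is active.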

Interestingly, Apple chose to allow configuration sharing across devices signed into an iCloud account. Apple allows each device to have its own state but Apple did not choose to allow you to create states associated with the use of a MacBook, connecting to a WiFi network not your home network, or being in a particular place or not. I could see business folk who make customer calls needing working-in-the-office and working-out-of-the-office states to change call and event filtering to suit being with a client. The same goes for studying at the library. Physicians and therapists may have similar needs when with a patient.

Focus is particularly helpful in suppressing untimely notifications while meditating or sleeping. It seems to work quite nicely. The initial configuration at first launch is minimalist. You’ll need to configure applications and contacts permitted.

Apple says little about time-critical vs routine notifications. I would imagine calls are time critical but most other things should be routine.

Photos App

Version 7.0 of Photos is the best yet. When used with iCloud Photo Library and the keep originals on this Mac option, it solves the problem of keeping images from multiple cameras straight. At least, for a single user. I imagine it would do equally well if each member of the household were using their own login and iCloud account.

I particularly like the way Photos curates photos to make the doom-scrollable cover view of a section of the library. I’ve taken to using Photos App rather than the File chooser to pick images for the blog and Twitter posts.

Photos is brilliant at cropping images. Photos uses automatic cropping to make the time-based library views. It almost always keeps the subject and trims off the excess.

Photos is also brilliant in its ability to open external editors that use the Adobe Creative Suite assistant application interface. Here’s the tricky bit. Most of my photography is iPhone snapshots around home and the neighborhood of weather, the garden, and the hounds. But there are times when I get the big still camera out. Nice as the iPhone camera is, it is no match for the Sony with its big lenses. Photos 7 nicely supports the Sony and will keep raw images and JPEGs. I would like it to send the raw image where available to the external editor.

I do most of my editing in Skylum Luminar AI. Skylum boffins were the developers behind the old Snapseed App and Nik Collection of Lightroom editing plugins. They have moved yet again and are continuing their artificial intelligence driven automatic editing development. The current Luminar series of products works brilliantly with Apple Photos App.

What Photos 7 does not currently support is the ability to make multiple renderings of an image. For example, you might wish to use different render settings or make a crop to exploit an image within the image. To do this now requires you to duplicate the image in Photos and make the alternate rendering of the duplicate. Would it be possible to have a tree of alternative renderings? And could we keep some notes with each image to recall why we made the rendering?

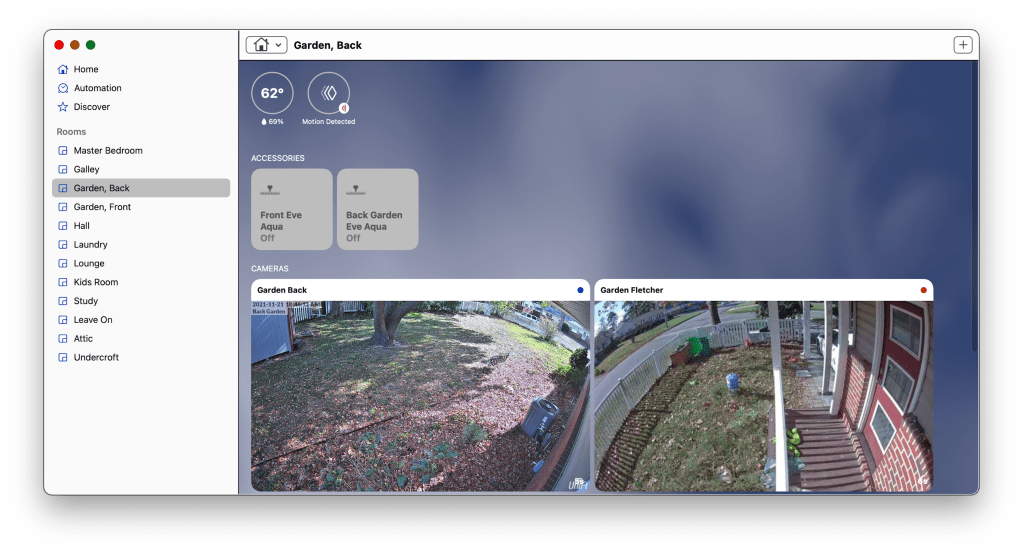

Home App

I’ve become a fan of Apple Home App, mostly. I dipped a toe into the water a couple of years ago by ordering some EveHome.Com door sensors to log opening and closing of the two entry doors. The primary idea was to review the event log to determine when an unauthorized entry occurred. Knowing the time would help with the police report and locating the unauthorized entry in the video logs.

Since then, I’ve added Apple HomeKit Secure Video cameras to watch the side porch landing and greyhound gallop at the fence. These cameras are the dogs door bell. They throw an animal sighted event when the dogs come up on the porch pad and I can go to the door to let them in. This all works nicely.

The cameras we use are from Eufy Life and are specifically designed for use with HomeKit. I also have some Ubiquiti UniFi Protect Gen 4 cameras that take archival footage. The Ubiquiti cameras can be configured to stream to HomeKit but do not currently support HomeKit Secure Video.

Since then, I’ve added a bunch of Eve Home weather sensors to assist me with setting the heating and air conditioning set points. I also have a pair that I use to track the attic and crawl space temperature and humidity so I can respond to excessive moisture in those spaces. These were a help in deciding to encapsulate the crawl space and add de-humidification. These sensors also clearly show the air con duct leakage in the unconditioned space that is about to become conditioned but uninsulated space.

I find the co-mingling of Music audio with MacOS/iOS audio to be problematic. If I’m using my iPad as an Apple Music controller while, say, reading News, and the news has embedded video, the system suspends my music to start rolling clip audio. This is really an issue that results from automatic media playing. Just try looking for a Netflix title for the evening while listening to new jazz. The experience is doubly horrible for contending with the Netflix haystack and the interruption of the recording by auto-played trailers, usually of endless explosions and car crashes.

Hey Siri, do you take requests?

Some things I’d like Apple to add or alter. And where would we be without Siri? Probably not in the Bay 🙂 Apple TV Siri is the only thing keeping Apple TV usable. Siri search finds the things the hideous UX keeps burying. More about this below.

Time Machine

Time Machine is finally sorted for backup to network attached storage. Here at Dismal Manor, Time Machine backs up to a TrueNAS Core server. The Time Machine destination volume is an encrypted dataset in the TrueNAS primary pool (the big one). Time Machine is configured to encrypt the backup in transmission. The encrypted stream is encrypted again for storage so things are pretty opaque.

I keep my keys in 1Password, both my Time Machine key and my TrueNAS key. TrueNAS also keeps the keys in the configuration data that I back up to iCloud from time to time. TrueNAS lets you recover the keys provided that you can log in to the running system. I believe TrueNAS keeps the keys on the boot disk and not on one of the data volumes. I use 1Password for this important task.

Since TrueNAS 12.0 and Monterey, I’ve had no trouble with volume mounts. MacOS manages to mount the volume for Time Machine without me mucking about. This is a big improvement over earlier versions.

1Password

1Password is one of the earlier third party password managers. It is adept at password generation and storage and integrates with web browsers for capture of new account creation. MacOS now allows use of a third party password manager in some roles originally filled by Keychain.

I’ve started using 1Password for 2FA and management of system encryption passwords because I’m familiar with it and I believe it to be reliable, available, and survivable. 1Password offers the following advantages over the alternatives.

- All items are encrypted using AES-256 strong encryption

- AgileBits keeps a copy of my data (encrypted, of course)

- AgileBits has mechanisms for account recovery should I ever get into a chicken and egg situation.

- 1Password supports 2FA and can compute 2FA time-based tokens for your 2FA accounts.

- 1Password browser plugin allows use of a Raspberry Pi 400 for account recovery.

- 1Password supports password escrow for estate executors. Passwords can be organized into groups and role based user access granted to each group.
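For the curious, the time-based tokens mentioned in the list above are not magic. The sketch below follows the public TOTP and HOTP specifications (RFC 6238 and RFC 4226) using only the Python standard library. It is an illustration of the algorithm, not 1Password's code, and the function names are my own.

```python
import base64
import hashlib
import hmac
import struct
import time


def hotp(secret, counter, digits=6):
    """RFC 4226 HOTP: HMAC-SHA1 of the 8-byte big-endian counter, truncated."""
    digest = hmac.new(secret, struct.pack(">Q", counter), hashlib.sha1).digest()
    offset = digest[-1] & 0x0F  # low nibble of the last byte picks the window
    code = struct.unpack(">I", digest[offset:offset + 4])[0] & 0x7FFFFFFF
    return str(code % 10 ** digits).zfill(digits)


def totp(secret, now=None, step=30, digits=6):
    """RFC 6238 TOTP: HOTP with the counter set to elapsed time steps."""
    if now is None:
        now = time.time()
    return hotp(secret, int(now // step), digits)


def secret_from_base32(text):
    """Decode the base32 secret most sites show during 2FA enrollment."""
    cleaned = text.replace(" ", "").upper()
    padding = "=" * (-len(cleaned) % 8)
    return base64.b32decode(cleaned + padding)
```

With the RFC test secret `12345678901234567890` and a clock reading of 59 seconds, `totp(b"12345678901234567890", now=59, digits=8)` gives `94287082`, matching the published test vector. The shared secret is the thing to guard, which is why it belongs in an encrypted vault.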

Applications needing further refinement

Apple continues to struggle with some aspects of several of its applications.

Apple TV and Apple TV+ App

Recently, the Accidental Tech Podcast folks gave Apple TV and Apple TV+ a thorough hiding. Apple has done a horrible job with the UI design as I will explain below. Apple has also done a horrible job introducing the product to the market.

- Stars in Apple TV+ programs say, “It’s on Apple”

- “It’s on Apple TV? I don’t think I have that. I’m not ready for a new phone.”

- Even Accidental Tech (3 freelance Apple developers and tech writers) have trouble using Apple TV app and Apple TV+

- They have the same experiences I describe below where they can’t find what they came to watch.

Kudos to Apple TV for finally getting dictated search items to work. And the video quality seems to be pretty good with the latest Apple TV. Voice search is essential for mitigating the horrid user interface.

Apple keeps looking for solutions to the needle in a haystack problem. Apple TV was supposed to sort it. Instead, Apple TV app greedily adds any program you’ve touched to your Watch Next list. Which is really bad. To determine if something is interesting requires rolling the trailer. Touching the trailer or the program notes adds the program to Watch Next. You decide not to watch, but it is in your watch list. So you have to weed the watch list to remove the greedy additions.

Apple TV also promiscuously pitches new releases to you while you are struggling to see older programs. Each evening, the home screen and your watch list look radically different than when you last used them. It is hard to follow a series. It’s necessary to keep reminders and use search to see your stories!

Music App

Music 1.2 is still a horror. Addition of Apple Lossless streaming capability is a big improvement but the app still has the architectural problems of its ancestors. I much prefer the Roon Labs, https://roonlabs.com/, library, controller, rendering agent system design. The Roon core does all program streaming from your library and subscriptions to one or more rendering agents. A Roon controller lets you manage your library kept on the core and your track and album lists at Tidal and Qobuz. It works very nicely and titles play seamlessly.

Apple Music information about artists and their work is weak compared to even Qobuz. Roon Labs gets this right in its Roon product. Apple Music is really poor at pitching similar artists and recordings.

There’s no reason Apple couldn’t adopt this same architecture using a MacOS daemon to stream music or an Apple TV device hosted daemon. Apple would have to make provisions for iOS only deployments as many Apple Music users have only an iPhone. This is probably Apple Music’s big money use case. About 100,000 of us care enough about music reproduction to use Roon.

Photos App as visual asset manager

Professional photographers have stringent requirements for organizing and retrieving images that center around their client driven business needs. They need to keep images separate by client and engagement and to organize them within each engagement to track which have been rendered, which were submitted to the client for review and approval, which the client has approved, and which were used in a graphic arts or video project.

I used to use category tags to try to recover my images. That meant that I had to tag everything. I quickly developed a set of tags that were ambiguous, included misspelled versions of a tag, etc. I never really used the tags for retrieval. What if Apple used its picture classifiers to tag photos in some ontologically sound manner? It could tag at import and we could adjust at review. The ability to filter the library view geographically and by subject tags would be quite helpful. Much more than looking for dick pics, Uncle Tim.

Video Asset Manager needed

There’s currently no good video asset manager on MacOS. Movie has become the forgotten child. BlackMagic Design DaVinci Resolve does not integrate with the Photos media library, which is deliberately opaque. Something is needed here that is more than a shoebox of clips but still hobby-oriented.
