TrueNAS is built on FreeBSD and ZFS, the copy on write filesystem originally developed at Sun Microsystems for use in petabyte scale systems, possibly with cluster filesystem support layered on top. Today, hobbyists and system integrators use TrueNAS in small scale file servers in home and office environments.

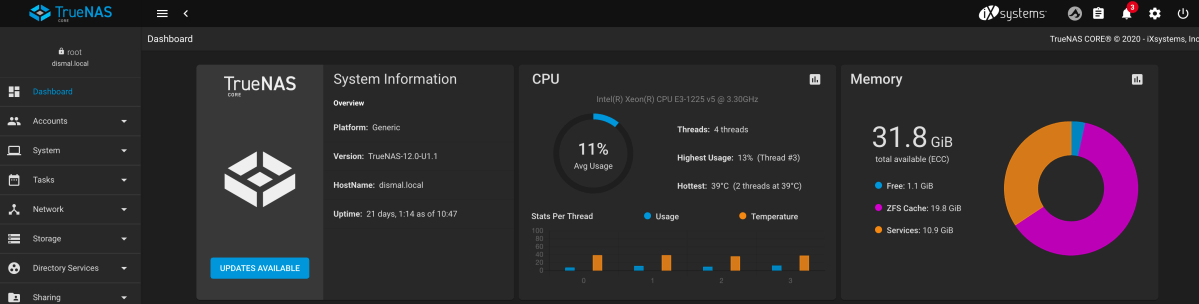

Storage systems purchased from iX Systems (are you a Dune fan?) make ZFS and FreeBSD-based TrueNAS accessible to small organizations needing reliable shared file storage. They are ideal for small medical offices and graphic and creative arts professionals needing working and archival storage. The TrueNAS SOHO systems are price competitive with home-built systems assembled from new parts because iX Systems buys components in volume. iX Systems carefully tailors the FreeBSD component selection and system configuration for the storage appliance mission. In most installations, TrueNAS functions solely in the storage role. In home and small office installations, it may also provide some application support. Here at Dismal Manor, our TrueNAS system also runs our Roon Audio service instance in a VM.

The capabilities of TrueNAS make it the superior choice for this application. The stand-out feature is check-summing of both file metadata and file data, which allows TrueNAS to validate data at rest. TrueNAS will detect and report bit rot and, where the pool holds a redundant copy, correct it.
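
To make the detect-and-correct idea concrete, here is a minimal sketch of a two-way mirror that verifies a stored checksum on every read. It is a toy model, not how ZFS is implemented: the `MirroredBlock` class is hypothetical, and SHA-256 merely stands in for whichever checksum algorithm a pool is configured to use.

```python
import hashlib


def checksum(data: bytes) -> str:
    # SHA-256 is a stand-in here; ZFS offers several checksum algorithms.
    return hashlib.sha256(data).hexdigest()


class MirroredBlock:
    """Toy two-way mirror: two copies of a block plus the checksum recorded at write time."""

    def __init__(self, data: bytes):
        self.copies = [bytearray(data), bytearray(data)]
        self.expected = checksum(data)
        self.repairs = 0

    def read(self) -> bytes:
        # Find the first copy whose checksum still matches.
        good = next(
            (bytes(c) for c in self.copies if checksum(bytes(c)) == self.expected),
            None,
        )
        if good is None:
            raise IOError("every copy fails its checksum: detected but not repairable")
        # Rewrite any copy that no longer matches (the self-healing step).
        for i, c in enumerate(self.copies):
            if checksum(bytes(c)) != self.expected:
                self.copies[i] = bytearray(good)
                self.repairs += 1
        return good


block = MirroredBlock(b"holiday photo")
block.copies[0][0] ^= 0x01  # simulate one flipped bit on the first disk
assert block.read() == b"holiday photo"
print("repaired copies:", block.repairs)
```

With only one copy, the same check would still detect the damage, but there would be nothing to repair it from.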

It’s all about timing

When I was looking for a NAS to replace my Drobostores, the Linux NAS storage market was in flux. Most Linux storage systems were based on kernel RAID using the EXT4 filesystem. EXT4 is a journaled filesystem designed for simplicity. RAID and LVM were separate layers, and check-summing happened at the block level.

At the time I built my server (2017), Netgear was transitioning ReadyNAS to BTRFS (said butter-fuss), but some models had made the move and some had not. Since then, Synology has transitioned its current (2021) products to BTRFS.

BTRFS, developed by Oracle, is intended to have feature parity with OpenZFS and Oracle ZFS. If buying from the Linux NAS vendors, check the fine print regarding software versions and beware of old stock from sellers. At this time (winter 2021), all products should be BTRFS-based. BTRFS CRC checks both metadata and file data and offers snapshot and replication features similar to those in ZFS.

This is a CONOPS Article

In this article, I’ll describe TrueNAS replication operational concepts and several uses of snapshots with replication in the SOHO environment. We’ll look at local backups, off-site backups, and new system data initialization. This article deliberately avoids implementation switchology as iX Systems may change it from release to release. The TrueNAS Guide is very good and continuously improving.

Since I originally set up replication on FreeNAS 9, life has become much easier using TrueNAS 12. The TrueNAS 12 user interface has eliminated most of the friction I experienced when making the initial replication setup in 2018. iX Systems wizards have established dialogs that support the most common use cases. In most cases it is no longer necessary to explicitly create the snapshot task, snapshot schedule, and replication tasks. Creation of a TrueNAS 12 replication task using the standard dialog will create the supporting snapshot task and snapshot schedule.

This post aims to guide the novice admin through the workflows needed to configure basic internal replication and external replications independent of the applications of the replication results.

Revisions

- Revised to add a section “Replications for Backups” and added the setting needed for that use case.

- Revised the intro to make it clearer what we were talking about, TrueNAS, ZFS, and ZFS replication.

- Revised to mention that 2021 ReadyNAS and Synology NAS offerings now use BTRFS rather than Linux LVM, Kernel RAID, and EXT4 filesystem.

- Corrected mistakes regarding ZFS recursion.

References

This article describes the capabilities of TrueNAS 12 which is built upon FreeBSD 12 with a significant storage system management environment added to the core system. iX Systems has written an excellent system Guide that describes the configuration and operation of the system. Please consult the Guide for all system operations tasks.

- https://www.truenas.com/docs/hub/tasks/scheduled/snapshot-scheduling/

- https://www.truenas.com/docs/hub/tasks/scheduled/replication/

- http://storagegaga.com/ransomware-recovery-with-truenas-zfs-snapshots/ (Windows 10 example)

- https://docs.freebsd.org/en/books/handbook/zfs/#zfs-term

- https://youtu.be/XOm9aLqb0x4 Tom Lawrence on ZFS Replication in TrueNAS 12.

- https://arstechnica.com/information-technology/2020/05/zfs-101-understanding-zfs-storage-and-performance/

ZFS Storage Model

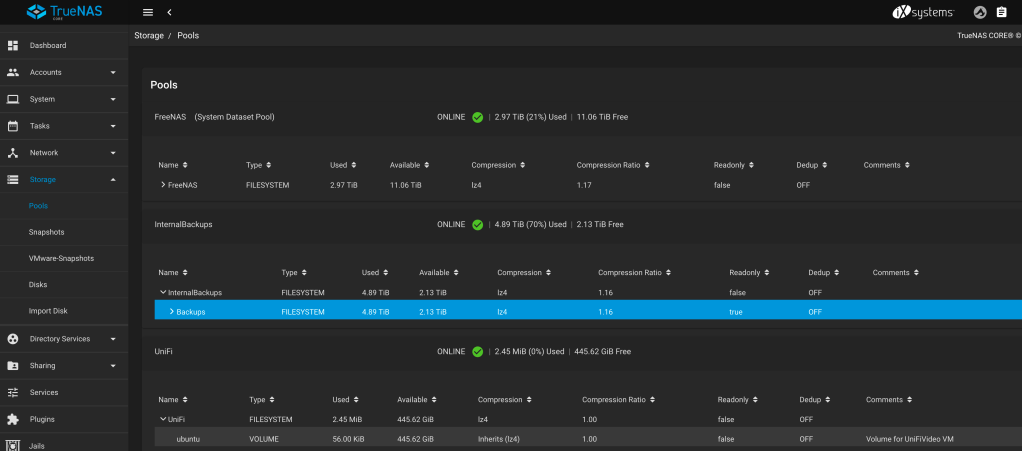

ZFS is very flexible in its ability to represent storage. A zpool is a virtual block device built up from vdevs which are groups of physical volumes treated as a logical unit.

A ZFS pool contains one or more ZFS datasets. Dataset is an umbrella term for the several storage structures a zpool may contain. Two of these, the volume (zvol) and the filesystem, are in common use. A volume has a set maximum size. A filesystem can grow to fill the zpool containing it. Zvols are most commonly used as containers to hold a virtual machine’s storage.

Unless otherwise configured, a volume or a filesystem inherits most of the characteristics of its parent container.

Interestingly, ZFS allows datasets to contain datasets. Administrators use this capability to impose some organization on top of the data. For example, a dataset named home may contain user home directories. Another might contain git repositories or in our case media with datasets for photos, music, and home videos.

Although the dataset hierarchy appears superficially like a filesystem directory hierarchy, it is different. ZFS recursion is the traversal of the dataset hierarchy to pick up the contained datasets. If a dataset has no children, ZFS dataset recursion is not necessary but may be safely enabled.

Pools also combine physical volumes into a larger logical volume. Depending upon the pool configuration, the user data can be mirrored or stored with single, double, or triple parity. A physical device can be replaced with one of the same size or larger. ZFS will recreate the content of the original media on the replacement media. If a larger device is provided, its extra space goes unused until all devices in the vdev are of the larger size.
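
The effect of swapping in a larger disk can be sketched with a little arithmetic. This is a rough estimate only, assuming a single parity-based vdev in which every member counts as the size of the smallest member; the function name and disk sizes are made up for illustration, and real pools lose some further space to metadata and padding.

```python
def usable_tb(disk_sizes_tb, parity_disks):
    """Rough usable capacity of one parity-based vdev, ignoring metadata and padding overhead."""
    if len(disk_sizes_tb) <= parity_disks:
        raise ValueError("need more disks than parity disks")
    # Every member is treated as if it were the size of the smallest member.
    return min(disk_sizes_tb) * (len(disk_sizes_tb) - parity_disks)


print(usable_tb([4, 4, 4, 4], 2))  # four 4 TB disks, double parity
print(usable_tb([8, 4, 4, 4], 2))  # one disk upgraded: no gain yet
print(usable_tb([8, 8, 8, 8], 2))  # every disk upgraded: the extra space appears
```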

Each pool has a root dataset that spans it. That dataset may contain both filesystems and volumes. Volumes can be sparsely provisioned but have an upper size limit. A volume presents as a block device, much like a partition or slice of physical or virtual media, and the client that uses it may format it with one or more filesystems of its own.

Filesystems contain either volumes or filesystems as noted above and are hierarchical. This gets a bit confusing but ZFS keeps it all straight.

Snapshots capture the current state of a dataset. They are taken on the live dataset as data is being written and read. A snapshot can be serialized to a new pool and dataset and the destination may be either local to the source TrueNAS system or sent to a remote TrueNAS system, pool and filesystem.

Snapshots and Replication

Snapshots and Replication are like peanut butter and jelly. They were made to go together. A snapshot is a read-only version of a filesystem at a point in time. It is compact because it shares every unchanged block with the live filesystem and only holds on to blocks that have since been changed or deleted. It allows the storage resource to be reconstructed in that state. When replicated to independent storage, the snapshot series serves as a sequence of backups of the state and contents of the pool or dataset (filesystem).

Copy on write filesystems like APFS, BTRFS, and ZFS never overwrite live data in place. Changes made by active processes are written to free blocks and grouped into transactions, and a transaction is committed by atomically switching the filesystem’s root pointer to the new block tree. If operation of the system is interrupted, the disk still holds the last committed transaction, so the filesystem is consistent without a static audit of the filesystem as part of the system recovery process. ZFS keeps a separate intent log only to replay recent synchronous writes. A snapshot simply keeps an old block tree from being freed, and a replication stream is a serialization of the changes to the dataset between two snapshots.

Replication is the process of transmitting and storing a snapshot from the snapshot source to a replication destination, usually a second internal pool and data set or a pool and data set on a second TrueNAS server. Replication of a snapshot is a good way to initialize the data on a newly commissioned TrueNAS server or to transfer data to a second pool that serves as a backup of the original.

The use of a snapshot sequence allows the saving of evolving versions of works in progress. If a work object (directory content or file content) is lost, it can be recovered from the most recent snapshot or from any earlier snapshot that contains it.

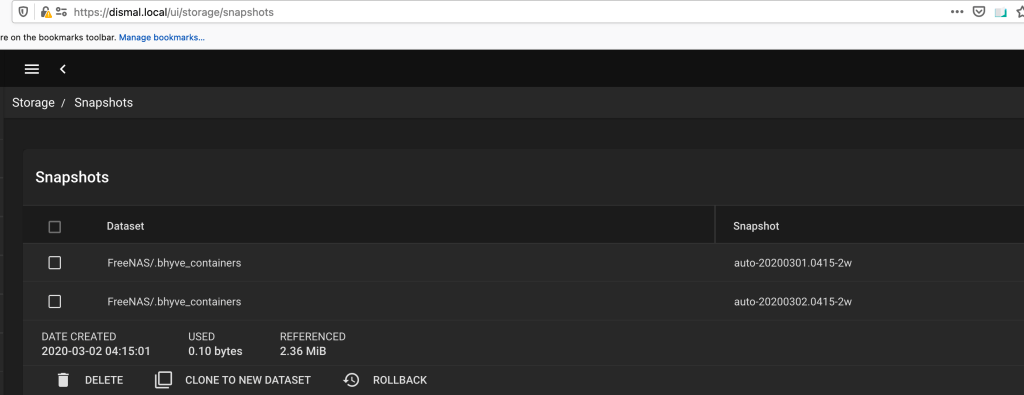

Snapshots

Snapshots are a compact representation of the state of a filesystem. The first snapshot describes the initial state of the filesystem. Each subsequent snapshot represents the state of the filesystem at a later point in time. The difference between two consecutive snapshots is the set of changes made in between, and it is this difference that an incremental replication serializes. A snapshot specification tells ZFS how to make snapshots of a filesystem.

ZFS creates a snapshot almost instantly at the scheduled time, since copy on write means it only has to keep the existing blocks from being freed. Once the snapshot is complete, it can trigger the replication.

Several things make up a snapshot description. Each snapshot specification has a content specification that describes the filesystem to be checkpointed. It has a snapshot schedule that specifies how often snapshots are to be taken. And it has a sequence of snapshot datasets that encode the original snapshot and the incremental changes between each snapshot point in time. If the snapshot is replicated to a second machine, a TrueNAS SSH connection entry describes the connection and a TrueNAS SSH key pair entry at the destination provides the necessary public key which is kept locally.

The snapshot specification describes what is to be captured. Snapshots can be “recursive” or not. A recursive snapshot includes each child filesystem and its children much as a recursive directory listing contains each child directory and its children.
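
As a small illustration of what recursion adds, the sketch below lists the snapshots a single scheduled run would create. The dataset names are invented, and the `auto-%Y-%m-%d_%H-%M` naming pattern is an assumption about the default schema; check your own snapshot task for the pattern it actually uses.

```python
from datetime import datetime


def snapshot_names(dataset, all_datasets, when, recursive,
                   schema="auto-%Y-%m-%d_%H-%M"):
    """Return the snapshot names one scheduled run would create (illustrative only)."""
    label = when.strftime(schema)
    targets = [dataset]
    if recursive:
        # Child datasets are those whose names extend the parent's name by "/...".
        targets += [d for d in all_datasets if d.startswith(dataset + "/")]
    return [f"{t}@{label}" for t in targets]


datasets = ["tank/media", "tank/media/photos", "tank/media/music", "tank/home"]
run_time = datetime(2021, 1, 15, 0, 0)

for name in snapshot_names("tank/media", datasets, run_time, recursive=True):
    print(name)
```

With `recursive=False`, only `tank/media` itself is captured and the photos and music datasets are left out.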

The snapshot specification also specifies the number of snapshots to be retained. When the limit is reached, the oldest snapshot is destroyed. Blocks that only that snapshot referenced are freed for reuse, while blocks still referenced by the live filesystem or by newer snapshots stay in place.
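
One way to picture how much space an expiring snapshot gives back is to treat each snapshot as a set of block references. This is a simplified model with made-up block numbers, not a description of ZFS internals: a block becomes free only when nothing else still points at it.

```python
def freed_by_destroy(snapshots, live_blocks, victim):
    """Return the block ids that become free when the snapshot `victim` is destroyed."""
    still_referenced = set(live_blocks)
    for name, blocks in snapshots.items():
        if name != victim:
            still_referenced |= blocks
    return snapshots[victim] - still_referenced


snapshots = {
    "mon": {1, 2, 3},
    "tue": {1, 2, 4},  # block 3 was rewritten as block 4
    "wed": {1, 4, 5},  # block 2 was rewritten as block 5
}
live = {1, 4, 6}       # block 5 was rewritten as block 6 after "wed"

print(freed_by_destroy(snapshots, live, "mon"))
```

Only the block that "mon" alone was holding is released; everything it shares with later snapshots or the live filesystem stays put.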

TrueNAS can list the available snapshot images and can perform the following operations on the selected images. It can:

- clone the dataset or pool to local storage in the state described in the snapshot,

- restore the dataset to the state described in the snapshot (a rollback operation),

- delete the snapshot.

Note that snapshots are part of the pool or dataset for which they are defined. To have the data appear in a different place, a replication task must be created to move the data.

Replication

Replication is the process of merging the snapshot into the destination filesystem. The destination system applies the serialized changes to its local copy of the destination filesystem.
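
The idea of sending only the changes and applying them at the destination can be sketched as follows. This is a conceptual model that assumes a dataset can be represented as a dictionary of paths to contents; real ZFS streams work on blocks rather than files, and the function names here are hypothetical.

```python
def incremental_stream(older, newer):
    """Serialize what changed between two snapshots of the same dataset."""
    changed = {path: data for path, data in newer.items() if older.get(path) != data}
    deleted = [path for path in older if path not in newer]
    return {"changed": changed, "deleted": deleted}


def receive(destination, stream):
    """Apply a stream to the destination's copy and return the updated copy."""
    result = dict(destination)
    result.update(stream["changed"])
    for path in stream["deleted"]:
        result.pop(path, None)
    return result


snap_monday = {"a.txt": "v1", "b.txt": "v1"}
snap_tuesday = {"a.txt": "v2", "c.txt": "v1"}

backup = dict(snap_monday)  # the destination already holds Monday's state
backup = receive(backup, incremental_stream(snap_monday, snap_tuesday))
assert backup == snap_tuesday
```

The destination must already hold the older snapshot for the incremental stream to make sense, which is why the first replication sends everything.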

TrueNAS 12 supports simple replications and advanced replications. Simple replications permit a simplified setup shown below. Advanced replication setup allows access to all of the elements of replication (snapshot, source and destination, schedule) and to all of the options for each element. Most users will use the simple replication dialog.

Replication at Dismal Manor

The TrueNAS documentation and associated user interfaces have been greatly improved in moving to the TrueNAS 12 UI. There is now an express setup for local replication. Local replication allows TrueNAS to keep an image of a pool or dataset in a local pool added for backup purposes. Dismal, the manor’s home server, keeps an internal backup on a single 8 TB storage rated disk. This disk has no redundancy but it has full data and metadata CRC checking and CRC verification just like that in the doubly redundant main pool.

Dismal currently replicates the FreeNAS pool to the Internal Backups pool daily at 0000R. This is a local replication. We’ve never placed the Unifi Video volume into production. Unifi Video and later, Unifi Protect run on a separate Unifi appliance.

Replication for backups

When configuring a replication as a backup replication, set the option to enable Full Filesystem Replication. This setting results in all essential attributes being saved. Per the tooltip for the setting:

“Completely replicate the selected dataset. The target dataset will have all of the properties, snapshots, child datasets, and clones from the source dataset.”

Local Replication

TrueNAS provides the Local Replication mechanism as a quick and easy means of setting up replication from a primary pool to a secondary pool both residing in a single TrueNAS server. Local replication uses the local transport. The dialog can configure local transport without further user assistance. Local Replication is intended for backing up a filesystem internal to the server on which it resides.

Dismal Manor uses local replication to make local backups of the primary pool’s outer filesystem. We have about 2 TB of data in the primary that we snapshot and replicate to the secondary. When setting up the snapshot time to live, you need to be mindful of snapshot retention time so as not to overflow the secondary. The Local Replication dialog handles creation of the snapshot data specification, the snapshot schedule, and the replication target specification and associated replication task. Manual snapshot specification is no longer needed.
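
A back-of-envelope estimate helps when deciding whether a retention setting will fit on the secondary. The sketch below assumes the worst case, in which every day's changed data is unique and is held until its snapshot expires. The 2 TB base and 8 TB disk come from our setup; the daily change figure is a made-up example.

```python
def estimated_backup_tb(base_tb, daily_change_tb, retention_days):
    """Worst-case space on the secondary: current data plus every retained day's changes."""
    return base_tb + daily_change_tb * retention_days


secondary_tb = 8.0
needed = estimated_backup_tb(base_tb=2.0, daily_change_tb=0.02, retention_days=30)
print(f"about {needed:.1f} TB needed, fits: {needed < secondary_tb}")
```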

Setting a finite retention time causes snapshots that age out to be destroyed and the space that only they were holding to be freed. So the retention time specifies the time window back from now in which it is possible to restore any prior saved state. Nothing older than the oldest retained snapshot can be restored.
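
The retention window can be sketched as a simple cutoff test. This is an illustration of the concept only, with hypothetical dates; TrueNAS does this bookkeeping itself.

```python
from datetime import datetime, timedelta


def expired(snapshot_times, now, retention):
    """Return the snapshot times that have fallen outside the retention window."""
    cutoff = now - retention
    return [t for t in snapshot_times if t < cutoff]


# Ten daily snapshots taken at midnight, 1 to 10 January 2021.
daily = [datetime(2021, 1, 1) + timedelta(days=d) for d in range(10)]
now = datetime(2021, 1, 11)

old = expired(daily, now, retention=timedelta(days=7))
kept = [t for t in daily if t not in old]
print("oldest restorable state:", min(kept).date())
```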

Local Replication is Good

The job of local replication in the Dismal Manor grand scheme of computing is to provide a local backup of the primary storage pool in the unlikely event that 3 disks break before the primary can be repaired and reconstructed. It is not intended to protect the system against memory issues (ECC does this job) or a colicky CPU (processor reliability, availability, and serviceability capabilities do this job). Local replication proceeds at hardware speeds and takes advantage of transfer caching. Remote replication proceeds at SSH line speed and is subject to contention with playing videos and music or some feeble minded IOT device going mental.
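
To put rough numbers on the speed difference, the sketch below estimates how long a full initial replication of our 2 TB would take at a given sustained throughput. The link speeds are illustrative assumptions, and real transfers will be slower once protocol overhead and contention are counted.

```python
def transfer_hours(size_tb, throughput_mbit_per_s):
    """Hours to move size_tb terabytes at a sustained rate given in megabits per second."""
    size_bits = size_tb * 1e12 * 8
    seconds = size_bits / (throughput_mbit_per_s * 1e6)
    return seconds / 3600


for label, mbit in [("gigabit LAN", 1000), ("20 Mbit/s uplink", 20)]:
    print(f"{label}: about {transfer_hours(2, mbit):.1f} hours")
```

After the first full transfer, only the changes since the previous snapshot need to cross the wire.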

Remote Replication

TrueNAS 12 also provides remote replication. A remote replication operation is the process of sending a replication snapshot from the primary machine and pool to a secondary machine and pool using a network connection. The secondary or receiving machine may be on the local network, at a friend’s house, or in a commercial service such as BackBlaze.

Remote replication can be used to initialize a newly commissioned TrueNAS server, to make colocated backups to a second TrueNAS server, or to make backups over the Internet to a remotely located server.

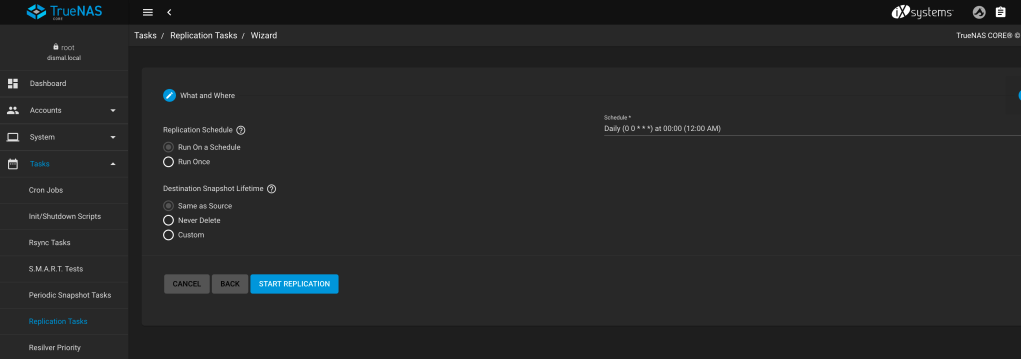

The Replication Wizard

The replication wizard in the Tasks UI is your friend. It will guide you through the steps needed to create a successful replication task.

Most importantly, it lets you browse the source and destination pools to choose the data to be sent and the dataset to receive it. The destination dataset need not exist but may need to be explicitly named. When the initial replication occurs, ZFS will create the destination filesystem.

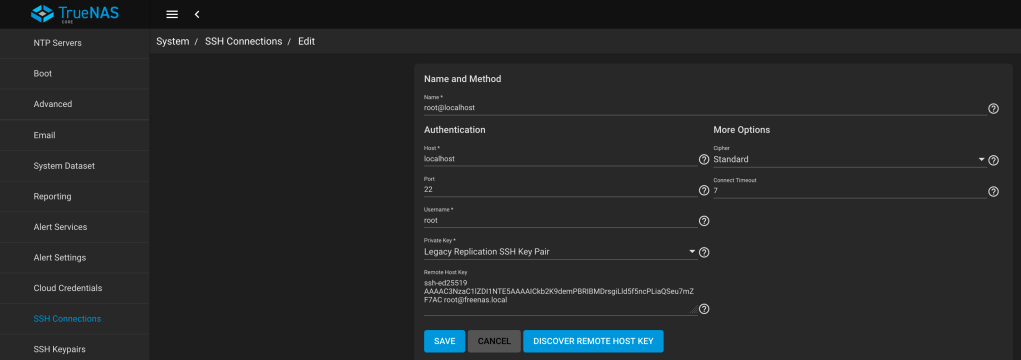

The replication wizard also lets you choose the transport used to transfer the snapshot to the target. Most often, this is an SSH tunnel created for the purpose. The replication wizard will assist you in choosing the tunnel from those available. These choices must first be created using the System -> SSH Connections tool.
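
Underneath the wizard, a replication over SSH is conceptually a ZFS send on the source piped to a ZFS receive on the destination. The sketch below only builds such a command line as text and does not run anything. TrueNAS drives replication with its own engine, so treat this as an illustration of the idea rather than the commands TrueNAS issues; the host, user, and dataset names are hypothetical.

```python
import shlex


def replication_pipeline(source_snapshot, dest_dataset, ssh_target,
                         previous_snapshot=None):
    """Build (but do not run) a send-over-SSH command line as a string."""
    send = ["zfs", "send"]
    if previous_snapshot:
        # -i sends only the changes between the previous and the current snapshot.
        send += ["-i", previous_snapshot]
    send.append(source_snapshot)
    # -F lets the receiver roll back to its latest snapshot before applying the stream.
    recv = ["zfs", "recv", "-F", dest_dataset]
    remote_command = " ".join(shlex.quote(a) for a in recv)
    ssh = ["ssh", ssh_target, remote_command]
    return (" ".join(shlex.quote(a) for a in send)
            + " | "
            + " ".join(shlex.quote(a) for a in ssh))


print(replication_pipeline(
    "tank/media@auto-2021-01-16_00-00",
    "backup/media",
    "replicator@nas2.example",
    previous_snapshot="tank/media@auto-2021-01-15_00-00",
))
```

Leaving out `previous_snapshot` produces the full send used for the very first replication.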

The replication wizard will let you specify recursive traversal of the source dataset’s contained datasets. You can select a subset of these to be transferred.

The replication wizard will let you specify if the data is to be encrypted at rest. Importantly, the source system can store the encryption keys used and export the keys. But it is wise to keep keys in BitWarden or 1Password so they don’t go missing.

Creating a local backup replication

In TrueNAS 12, you can duplicate and modify an existing replication or allow the replication dialog to create a snapshot for you (recommended for simple source specifications). First, create the replication and its associated snapshot as shown below.

Setting the Replication Schedule

Then create the replication schedule. A replication may occur once or periodically with some number of snapshots to be retained. The Next button takes you to the schedule form shown below.

Remote Replication SSH Authentication

Remote Replication requires that the target machine supports SSH authentication of the source clients. The new UI simplifies the procedures for establishing the SSH connection by moving it out of the replication dialog. Create the SSH connection first, then create the replication.

- Generate the SSH key pair on the destination machine.

- Switch to the source machine

- Create the SSH connection (in System)

- Click the button to schlep the destination machine’s public key to the source machine.

- Save the connection.

The source machine will use the destination machine’s public key to authenticate the connection and to secure the transfer.

Encrypting the Destination Dataset

With the new TrueNAS 12 system, creation of backups that are encrypted at rest is almost simple. The target pool must be encrypted. The resulting filesystem and each replicated snapshot will inherit the encryption of the pool. So this takes some forethought when creating the pool. The pool must be created with encryption enabled.

The encryption question appears at the start of the create pool dialog. The dialog will ask for or create a key. Let TrueNAS create the key as it knows best practices. AES-256 keys are recommended. The key is stored as part of the system parameters, so be sure to back these up to a secure, off-site location. You will need the key if the pool is to be moved to a new system. I don’t believe the key is part of the pool’s metadata (for obvious reasons).

The TrueNAS 12 Replication UI will let you specify an encrypted target in a plain-text pool but the transfer will fail with the error that the encryption is different than that of the pool. The message in the alert Email is below. The quoted text is the names of the filesystems used as the replication source and replication destination. Note that a filesystem spans each pool.

Replication “FreeNAS pool to encrypted Internal Backups pool ” failed: Destination dataset ‘InternalBackups/Encrypted Backups’ already exists and is it’s own encryption root. This configuration is not supported yet. If you want to replicate into an encrypted dataset, please, encrypt it’s parent dataset.

TrueNAS 12 does not allow nesting of encrypted file systems so encryption must be applied at the pool level and inherited by all containers in that pool.
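
The rule implied by the error message can be written down as a small check. This is my reading of the message rather than documented TrueNAS behavior, and the `encryption_roots` set is a hypothetical input that a real system would have to obtain by querying ZFS.

```python
def parents(dataset):
    """Yield each ancestor of a dataset name, nearest first."""
    while "/" in dataset:
        dataset = dataset.rsplit("/", 1)[0]
        yield dataset


def encrypted_target_problem(dest, encryption_roots):
    """Return a reason the destination is unsuitable, or None if it looks fine.

    encryption_roots is the set of datasets that carry their own key
    (an assumed input for this sketch).
    """
    if dest in encryption_roots:
        return "destination is its own encryption root"
    if not any(p in encryption_roots for p in parents(dest)):
        return "no encrypted parent to inherit from"
    return None


# The failing case from the alert: the target dataset was encrypted on its own.
print(encrypted_target_problem("InternalBackups/Encrypted Backups",
                               {"InternalBackups/Encrypted Backups"}))
# The working case: the pool is encrypted and the target inherits from it.
print(encrypted_target_problem("InternalBackups/Backups", {"InternalBackups"}))
```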
