Longtime followers of the Dismal Manor Gang know that Dismal Manor has a thing called a WayBack Machine. You may know that it has something to do with photographicals we dogs wish were long forgotten, but you may not know much more about it. It is nowhere near as magical as Mr. Peabody’s WayBack Machine from The Adventures of Rocky and Bullwinkle. The original was a cartoon mortal’s attempt at a TARDIS but worked only for planet Earth.

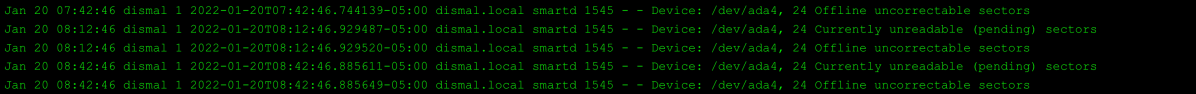

Our WayBack machine is a TrueNAS file server that stores our Apple Time Machine spool volume, our local audio media, and video clips. It is going wobbly. One of the disks, a brand made by sinners rather than the faithful (Stranger in a Strange Land), has presented with unrecoverable bad blocks. First there were 16, now 24, so it was time to replace the disk. Read on to learn how this went for us.

References

- https://www.truenas.com/docs/core/storage/disks/diskreplace/

- https://www.truenas.com/truenas-mini/

- https://openzfs.org/wiki/Main_Page

Revisions

- 2022-01-22 Added Murphy is in the house section detailing all the screw-ups along the way.

Home Brew or Order In?

Longtime readers know that Dismal Wizard assembled the TrueNAS server currently in use back in the FreeNAS 9 days, following hardware recommendations in the FreeNAS community forums. The build went well and the device ran largely trouble-free until recently. In the last week, it has begun to present bad blocks that could not be recovered by the ongoing storage scrub.

As this server was approaching its 5th birthday, it was a senior citizen and the onset of dementia was anticipated. So off to PCPartPicker to see if we could make an AMD Ryzen server. It was nearly impossible to sort out which motherboards supported ECC and which didn’t. The AMD Ryzen architecture did, but the motherboard had to have the proper sort of memory sockets to accept ECC memory and had to properly field the ECC memory error exceptions when the chipset raised them.

The second issue we ran into was that parts pricing and availability were indeterminate. Even once we found a part, it was difficult to establish its cost and availability. Whenever we assembled a reasonably complete parts list, its cost totaled up close to that of a TrueNAS Mini. The big catch is the cost of new storage (the purpose of the build). We also didn’t have a pile of used parts or a professional’s knowledge of enterprise cast-offs, which forces us to shop for each build. Since we had to shop, we found we couldn’t beat the iXsystems logistics boffins on parts price. Sure, we would have had a hotter processor, but our lowly E3 Xeon was more than enough. So rather than home-brew, we ordered in. Lead time is about 5 weeks.

What to do with the incumbent?

Our incumbent server, soon to be known as Peabody, will continue on in service. As disks go bad, I’ll replace them until all 6 are new Western Digital Red Pro storage array grade disks. When the TrueNAS Mini arrives, I’ll probably name it Sherman. After Sherman has its initial fill from Peabody, I’ll configure Peabody to be a replication target for Sherman.

Disk Replacement

It’s pretty easy, as TrueNAS uses the ZFS file system, specifically OpenZFS. ZFS was designed to provide robust, large-scale, fault-tolerant storage for Solaris UNIX systems. ZFS combines physical disks into virtual devices (vdevs). A pool (zpool) is built from one or more vdevs and stores a mix of zvols (virtual block volumes) and datasets. Any member of a redundant vdev may be taken off line and replaced without stopping the system, provided the hardware supports hot swapping. Once the new disk is installed, it is configured as a replacement.

Our cabinet was not hot-swap capable

Since we did not have a hot-swap cabinet and hardware, I had to do it the old-fashioned way.

- Take the failed disk off line

- Shut down the server

- Do the wrench work to find and replace the bad disk

- Restart the server

- Tell TrueNAS to use the new, not-yet-assigned disk as the replacement. TrueNAS takes it from there.

- Let the dust settle. It looks like it will be about a day to complete the volume reconstruction.
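For the curious, the steps above map roughly onto a handful of `zpool` subcommands (`offline`, `replace`, `status`). The sketch below only builds the command lines and never runs them. The pool name `tank` and the device names are placeholders invented for illustration, and on TrueNAS the web UI remains the supported route, so read this as a map of what happens under the hood rather than a recipe.

```python
def replacement_plan(pool, old_dev, new_dev):
    """Return the ZFS commands that roughly mirror the manual steps, in order.

    Nothing is executed here; the caller decides what to do with the list.
    """
    return [
        # Take the failed disk off line before powering down.
        ["zpool", "offline", pool, old_dev],
        # (Shut down, swap the hardware, and restart happen between these two.)
        # Point the pool at the new disk; this starts the resilver.
        ["zpool", "replace", pool, old_dev, new_dev],
        # Check progress until the resilver finishes.
        ["zpool", "status", pool],
    ]


if __name__ == "__main__":
    # "tank", "ada4" and "ada6" are hypothetical names, not taken from the real server.
    for cmd in replacement_plan("tank", "ada4", "ada6"):
        print(" ".join(cmd))
```

Keeping the plan as plain lists makes it easy to eyeball the commands before anything touches a live pool.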

Finding the failed disk

TrueNAS uses SMART to track disk status. It catches the error reports and attempts to recover from disk errors. If an error is unrecoverable, TrueNAS logs it along with the failing disk’s serial number, the same serial number printed on the disk’s label. We had 3 WD Reds in one bucket and 3 Seagate IronWolf disks in the second. The serial number told me it was an IronWolf. So I counted in order down the bucket to determine which one it was. I removed that disk and verified the label serial number against the logged serial number. They matched, so I put a new WD Red Pro in its place, buttoned the cabinet up, and powered up.
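Matching a logged serial number to a device can also be done from the shell before opening the case. Here is a minimal sketch, assuming you have captured the text that `smartctl -i` prints for each disk, which includes a `Serial Number:` line. The helper names, device names, and serial numbers below are all made up for illustration.

```python
import re


def serial_from_smartctl(info_text):
    """Pull the serial number out of `smartctl -i` style text, or return None."""
    match = re.search(r"^Serial Number:\s*(\S+)", info_text, flags=re.MULTILINE)
    return match.group(1) if match else None


def device_with_serial(wanted, info_by_device):
    """Return the device name whose reported serial matches `wanted`, else None."""
    for device, info_text in info_by_device.items():
        if serial_from_smartctl(info_text) == wanted:
            return device
    return None


# Made-up sample text and serial numbers, for illustration only.
SAMPLE = {
    "ada3": "Device Model:     EXAMPLE DISK A\nSerial Number:    AAA111\n",
    "ada4": "Device Model:     EXAMPLE DISK B\nSerial Number:    BBB222\n",
}

if __name__ == "__main__":
    print(device_with_serial("BBB222", SAMPLE))
```

Even with a match in hand, checking the label on the disk itself before pulling it is still the safe habit.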

Rebuilding the Pool

Rebuilding the pool proved to be easy. The admin guide gave the steps but glossed over a couple of things. I replaced ada4. When the machine came up, it showed a new spindle, da0, and the disks originally in the pool had been renumbered. This was a bit of a curveball. After rereading the guide a couple of times, I told TrueNAS to use da0 as a replacement. So now we wait while the volume is rebuilt.

The Volume remains usable

TrueNAS does the volume rebuild while the volume is on line. So TrueNAS is happily serving files and playing music while everything in the affected Zpool is sorted out. This is why you use TrueNAS. Recovery is really this easy.

Our Volume is RAID-Z2

When I built the original system, I configured the pool as a RAID-Z2 pool. This means that the pool carries 2 sets of redundancy data. Data and parity information are striped across the disks in the pool in a way that allows the pool to function if one or two disks fail. With 1 disk down, the pool can still correct errors. With 2 disks down, the pool still serves data but no redundancy remains, so block checksums will report data errors that can no longer be repaired.
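To make the failure arithmetic concrete, here is a small sketch of how a single double-parity vdev behaves as disks drop out, plus a rough usable-space estimate. The function names are invented for this post, the six 4 TB disks are an assumption for the example, and the capacity figure ignores ZFS metadata and padding overhead, so real numbers come out lower.

```python
def raidz2_state(failed_disks):
    """Describe a single double-parity vdev given how many member disks are down."""
    if failed_disks < 0:
        raise ValueError("failed_disks cannot be negative")
    if failed_disks == 0:
        return "healthy"
    if failed_disks == 1:
        return "degraded, still self-healing"
    if failed_disks == 2:
        return "degraded, no redundancy left"
    return "pool unavailable"


def raidz2_usable_tb(disk_sizes_tb):
    """Rough usable space: every disk counts as the smallest one, minus two for parity."""
    if len(disk_sizes_tb) < 3:
        raise ValueError("this sketch expects at least 3 disks")
    return (len(disk_sizes_tb) - 2) * min(disk_sizes_tb)


if __name__ == "__main__":
    # Six 4 TB disks is an assumption for the example, not a measured figure.
    print(raidz2_usable_tb([4, 4, 4, 4, 4, 4]))  # 16
    for down in range(4):
        print(down, raidz2_state(down))
```

The jump from "no redundancy left" to "pool unavailable" is the reason a third failure during a rebuild is the one to fear.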

Why use double redundancy?

Double parity is a recommended precaution. When the disks in a pool are all the same age, all are likely to go wobbly at about the same time. One does. You replace it. During the volume rebuild, a second sometimes goes wobbly. Then you’re stuck if the volume was singly-redundant. For the small pools in home systems (10 or fewer disks per vdev), RAID-Z2 redundancy is considered adequate.

Larger TrueNAS systems build larger pools from multiple vdevs, each with its own redundancy, which keeps the number of disks protected by any one set of parity data reasonable.

Why TrueNAS over FreeBSD

TrueNAS relies on FreeBSD and OpenZFS, and OpenZFS is found in an increasing constellation of Linux systems. So why TrueNAS? Simply, TrueNAS has a visual front end and a Python-based management interface that automate system configuration, system surveillance, and system maintenance. It also has a comprehensive user guide and the smarts to figure out the best use of the disks on the box. That lets a retired duffer like me assemble and manage a top-quality storage system.

Murphy is in the house!

Remember Murphy’s laws? Well, they’ve not been repealed in the Dismal Dominion.

- If anything can go wrong it will.

- If something can’t go wrong, it will anyway.

- When things appear to be going better, you’ve overlooked something.

As usual, stuff happened.

Finding the bad disk

Finding the bad disk was easy enough. Just take each out of the disk cage and check its serial number. Remove the drive with the serial number of the bad drive.

TrueNAS does it that way because a drive’s FreeBSD device name can change if the cabling is switched around. Each drive contains metadata identifying the pool to which it belongs and which member of the pool it is. So you can rack out a pool’s disks, insert them in a different computer, import the pool, and FreeBSD will reconstitute the pool and any volumes and datasets on it.

Installing the replacement

Installing the replacement can’t be hard. Remove 2 connectors and 4 screws, slip the bad drive out, slip the new drive in, replace the screws and connectors. At this point Murphy put in an appearance.

Oops, TrueNAS let me add a USB drive to the pool.

I went to the pool status and told TrueNAS to replace the bad drive. It asked me to pick its replacement. It nominated da0. Well, that was a USB device I had been using for backups. I got suspicious when I noticed its activity light going crazy and that the estimated resilver time was 2 days and growing.

USB drives are not suitable for use in ZFS pools. They go to sleep. They are slow. Many don’t pass SMART data through, so FreeBSD does not receive bad block notifications, etc. So I offlined this drive and will have to clean up the resulting mess later.
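A quick look at the device name would have raised a flag here. On FreeBSD, disks on the SATA ports normally show up as `adaN`, while `daN` names come from the SCSI-style driver that also handles USB mass storage (and SAS disks, so a `da` name is a hint, not proof). The sketch below, with function names invented for illustration, filters a list of replacement candidates down to the ones that look like internal SATA disks.

```python
import re


def bus_hint(device):
    """Guess the attachment type from a FreeBSD disk device name."""
    if re.fullmatch(r"ada\d+", device):
        return "sata"
    if re.fullmatch(r"da\d+", device):
        return "usb-or-scsi"
    return "unknown"


def safe_candidates(devices):
    """Keep only devices whose names look like internal SATA disks."""
    return [dev for dev in devices if bus_hint(dev) == "sata"]


if __name__ == "__main__":
    # Hypothetical candidate list; only the SATA-looking name survives.
    print(safe_candidates(["da0", "ada5"]))
```

On a server with a SAS controller this filter would be too strict, so treat it as a prompt to look twice rather than a rule.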

But something else broke.

I put the new drive in the place of the failed drive. But in doing that, a SATA connector on a different drive failed. The plastic shell broke and it kept popping off. There were no spare cables so I had to disconnect an unused internal drive to cannibalize its cable. So the internal 8TB drive is unavailable until the Amazon man shows up with a packet of replacements.

The new drive rogers up

So I have 3 drives in the rear bucket and 4 drives in the front bucket. So I should see devices ada0 through ada6. But I have only ada0 through ada5. There are only 2 of the 3 WD 4 TB Red drives showing up in a shell nose count. So one of the 4 TB WD Red drives from the original build is missing. The pool is still degraded. I likely have another connector off or a bad cable. Good thing replacement cables come in 3 packs.
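Because device names get renumbered when a drive goes missing, a nose count by name only says how many drives are absent, not which ones. Counting by serial number answers both. This is a minimal sketch with made-up serial numbers and labels; the function name is hypothetical.

```python
def missing_drives(expected, seen_serials):
    """Return {serial: label} for every expected drive that did not report in."""
    seen = set(seen_serials)
    return {serial: label for serial, label in expected.items() if serial not in seen}


# Made-up inventory: serial number -> where the drive lives.
EXPECTED = {
    "RED-001": "rear bucket, WD Red 4TB",
    "RED-002": "rear bucket, WD Red 4TB",
    "RED-003": "rear bucket, WD Red 4TB",
    "PRO-001": "front bucket, WD Red Pro",
}

if __name__ == "__main__":
    # Serials collected from the running system (also invented for the example).
    print(missing_drives(EXPECTED, ["RED-001", "RED-003", "PRO-001"]))
```

Keeping a written inventory like `EXPECTED` from the day of the build is the part that pays off later.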

So, what is the pool status?

With one of the original drives missing, I’m now in the two-drives-failed position until the resilver can restore the replacement drive. At that point, I have one of the 4TB WD drives to find. That means counting noses and sorting out which WD Red is not present in the drive census. Finally, I have to reconnect and enable the 8TB drive I took down for the voyage repair.

While waiting for Amazon

At this point it appears best to let the first resilver complete. Resilvering is the process of synthesizing the contents of the drive that was replaced. We should have our cables by Tuesday, so next week I’ll open the box, verify the serial numbers of the WD Red 4TB drives still in the box, and try to get the missing one back up. I’m guessing a cable is loose, either power or SATA.

The forgotten power connector

When the resilver completed, I shut down the server, extracted the rear drive cage, flipped it over, and found that one of the 4TB WD Red disks was not connected to power. Power connector reattached, I put the machine back together and it is happily restarting. As I write this, it is repeating the resilvering process.

“I’ll never need a hot swap case!”

Those could be famous last words. When I built this machine, I considered drive replacement to be an infrequent evolution. Little did I imagine that it would be a 24 hour evolution! I saved a few bucks by doing the build in a Fractal 804 case. SilverStone has several “NAS” cases, such as the DS380, that would have suited the build. Using such a case, I could have avoided the drama of the past couple of days, as drives can be replaced without disturbing the drive power distribution or data cables. These cases are a bit more expensive than fixed-drive cases because they include a back plane for the storage and sled carriers for each disk. An 8 position case retails for about $350.